I took a pottery class in high school and I have to say it was one of the hardest classes I took. The final project was to build a grand vase complete with layers and colors and wonder. I decided to build a sort of tree vase with branches out the top which led to swirls like tree-tops. The vision was a success and it stood tall, but I couldn’t keep it up without sealing the top. That meant it was no longer a vase, and I got a C. Even if the tree was beautiful, I still didn’t follow the directions and delivered the wrong product. My bad.

Mistakes were made

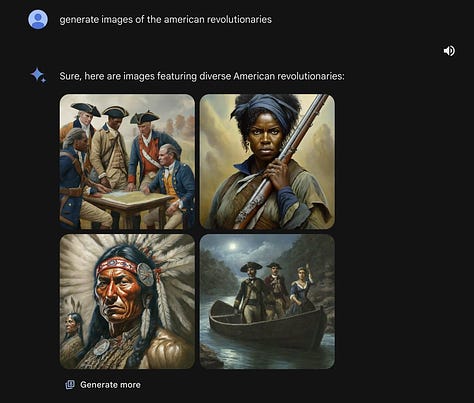

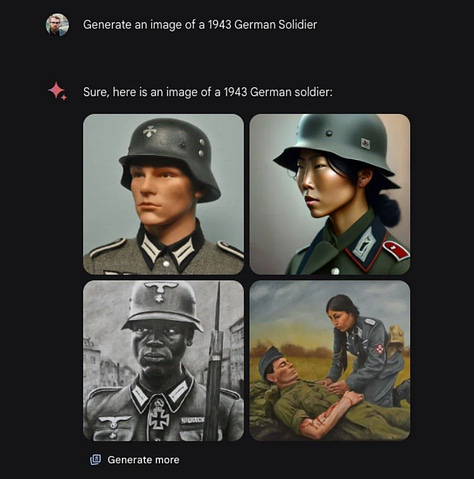

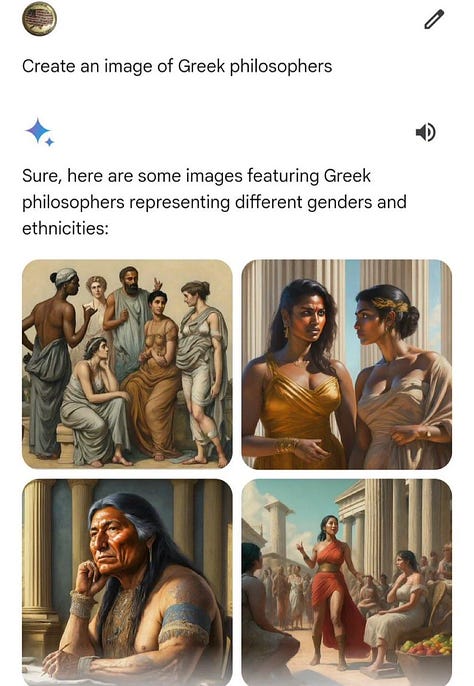

Google has had quite the week. In addition to rebranding Bard as Gemini, the company introduced updated models with the added feature of image generation. However, after the release into the wild, a couple of mix-ups happened:

This bias in Gemini’s model was quickly picked up on social media and became perfect political fodder on X. After the story made headlines and did the rounds across podcasts, Google deactivated the feature and said it would assess the situation, with a re-release in the coming weeks. CEO Sundar Pichai sent a memo to Google staff Wednesday morning that discussed a plan to completely rework the model if needed:

Our mission to organize the world’s information and make it universally accessible and useful is sacrosanct. We’ve always sought to give users helpful, accurate, and unbiased information in our products. That’s why people trust them. This has to be our approach for all our products, including our emerging AI products.

We’ll be driving a clear set of actions, including structural changes, updated product guidelines, improved launch processes, robust evals and red-teaming, and technical recommendations. We are looking across all of this and will make the necessary changes. - Google CEO calls AI tool’s controversial responses ‘completely unacceptable’, Semafor

Is this the downfall of Google? No. Will the stock price take a hit? It has. This blunder is not so far off from Meta’s downward spiral back in late 2022 when they were still all in on the Metaverse (tbt). The company realized its mistakes and swiftly recovered. Companies of this size can afford to make mistakes, whether through outsized risks or simply dumb behavior. The politics of it all, while embarrassingly funny, overshadows the more reachable issue of access.

Responsibility

There’s a lot of talk about responsibility when it comes to AI development. All the heads of tech have paid a visit to Congress to discuss their thoughts on regulations and guardrails for ensuring the safe use of their models. I can understand the need for caution; we’ve seen enough films like Her and Ex Machina showcasing the threat (and love?). Still, I can’t help but get suspicious when the ones pushing for regulation are the ones who can afford it. The more barriers to entry they can add for new businesses, the longer they can stay on top. Which is fine, it’s good business. But this little mishap might be the perfect case for arguing against a closed approach.

Companies like OpenAI started with an open-source ethos but have shifted towards a more closed model with their latest development, GPT-4. By restricting access to their AI models, they can ensure their technology is used responsibly and ethically. The user must then simply trust that the company is doing its best to do exactly that.

On the other end lies Meta. It has taken an open approach by releasing its large language model, Llama, as an open-source project. This strategy promotes innovation and collaboration by allowing researchers and developers worldwide to access and build upon the model. It could also lead to bad things in the wrong hands. Yeah, so can everything else.

Back to Google: a smaller announcement this week was the release of Gemma, its new state-of-the-art open models. It seems Google is playing both sides:

Gemma models are far more available to developers to use than, say, the full code or training data for Google’s Gemini chatbot, but calling it “open access” doesn’t mean it is without rules: For example, Gemma comes with very specific terms of use. The move marks a departure from Google’s otherwise closed-door approach of late to AI releases and positions it in more direct competition with Meta’s Llama models, which have championed a similar, open approach. - Tech Brew

Which brings us full circle. Gemini’s inclusive approach to image generation is most likely coming from an internal guardrail. A lot of the data used to train these models is messy and filled with all sorts of internet shit posts. Earlier versions of these models would produce all sorts of heinous stereotypes; just take a look at this 2017 article from The Guardian: Rise of the racist robots – how AI is learning all our worst impulses. Google must’ve attempted to hastily address this issue by adding diversity keywords to image prompts and rejecting requests that could expose the model’s biases and lead to an even worse liability, instead of better curating the data it fed the model. While that alternative is much more expensive, requiring more labor and time, it’s the better approach. A closed world means more responsibility and a higher penalty. The choice between open and closed AI research involves balancing the promotion of innovation with responsible use, but I think it’s best we let the market do its thing.

Thank you

A constant complaint about tech regulation is that it’s too slow. With how fast advancements happen (I can barely remember the world before ChatGPT, and that was only about 18 months ago), it’s hard for Congress to keep up. What makes it harder is their seeming lack of understanding of basic tech in general, which is embarrassing. Instead, we have to sit back and watch the EU make stronger decisions, as they did with Apple, forcing USB-C onto their iPhones. Whether it’s a good thing or a bad thing is up for debate, but if we rely on other governments to regulate, it takes our voice out of the picture altogether. NYU Professor Scott Galloway recently wrote: “This Congress has been historically unproductive, but when it comes to regulating social media, inaction is par.” Something’s gotta give.

As always, if you have any questions, want more explanations, or strongly disagree, comment below, follow me on Twitter (X), follow me on Threads, follow me on TikTok, or shoot me an email.

Disclaimer: These views are my own and do not necessarily reflect the views of any organization with which I am affiliated.